The Dawn of Algorithmic Warfare: Israel's AI Edge

In an era where geopolitical tensions simmer beneath the surface of global discourse, reports have emerged suggesting that Israel has developed and potentially deployed a highly advanced Artificial Intelligence platform aimed at precision targeting of Iranian leaders. This alleged development marks a significant, and potentially unsettling, evolution in modern warfare, pushing the boundaries of strategic conflict into the realm of algorithmic decision-making and lethal expertise. While specific details remain shrouded in the secrecy typical of national security operations, the implications of such a system are profound, reshaping our understanding of statecraft, ethics, and the future of conflict.

The concept of AI-driven warfare has long been a subject of science fiction and ethical debate. However, these reports indicate that such capabilities are no longer hypothetical but are actively being integrated into real-world military strategies. For Israel, a nation perennially navigating complex security challenges, leveraging cutting-edge technology like AI offers a perceived advantage in a volatile region. For Iran, such a platform represents an unprecedented threat, introducing a new layer of precision and analytical depth to intelligence-gathering and potential neutralization operations.

Understanding the Alleged AI Platform's Capabilities

No official confirmation exists, but intelligence community whispers and expert analyses point towards an AI system capable of intricate data synthesis and predictive modeling. This platform is not merely an automated drone control system; it is envisioned as a strategic tool that can:

- Process Vast Data Sets: Analyzing immense volumes of intelligence, from open-source information and satellite imagery to intercepted communications and human intelligence reports. This includes behavioral patterns, travel itineraries, social networks, and vulnerabilities of high-value targets.

- Identify Patterns and Anomalies: Detecting subtle patterns and anomalies in data that human analysts might miss and that could indicate an opportunity for a strike or an emerging threat.

- Forecast Target Behavior: Generating probabilistic forecasts of target movements, meeting schedules, and operational vulnerabilities, allowing for optimal timing and method of intervention.

- Assess Risk: Evaluating potential collateral damage, operational risks, and geopolitical fallout of various intervention scenarios, presenting decision-makers with a comprehensive risk-reward matrix.

- Learn Adaptively: Continuously learning from new data, outcomes of past operations, and evolving enemy tactics, thereby refining its models and improving its targeting efficacy over time.

This level of autonomous data processing and recommendation-generation implies a system designed to reduce the ‘fog of war’ and empower human operators with unprecedented clarity and options. The emphasis here is on 'lethal expertise,' suggesting that the AI not only identifies targets but also assists in crafting precise, effective, and perhaps non-conventional engagement strategies.

Ethical Quagmires and Legal Labyrinth

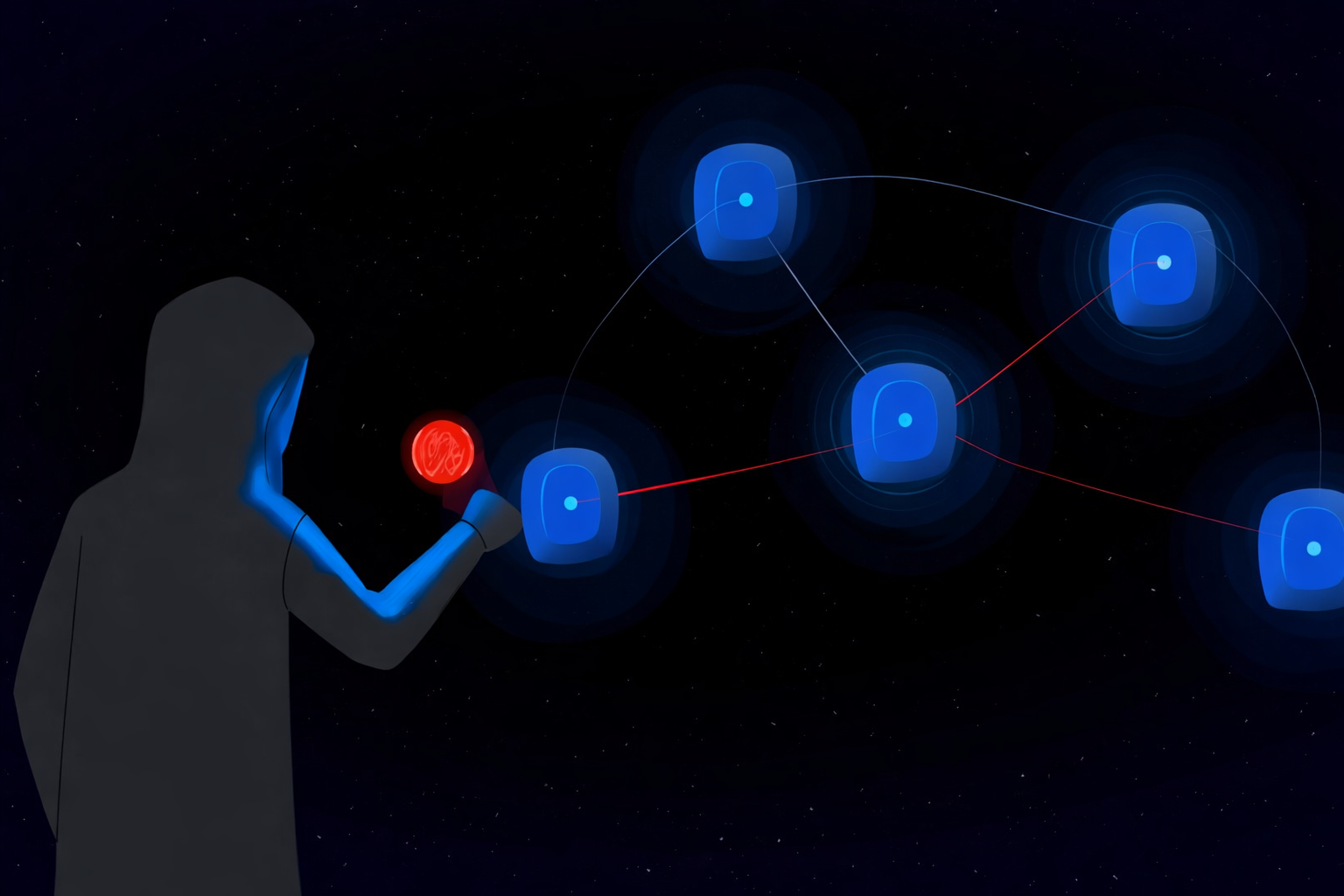

The deployment of an AI platform with such capabilities immediately thrusts the global community into a complex ethical and legal debate. The central question revolves around accountability and human control. If an autonomous system identifies a target and recommends a lethal action, where does the ultimate moral and legal responsibility lie? Is it with the programmers, the commanders who authorized its use, or the operators who pull the trigger (or approve the automated action)?

International humanitarian law, designed for human combatants, struggles to fully encompass the nuances of AI in warfare. Concepts like distinction, proportionality, and precaution become incredibly difficult to apply when an algorithm is heavily involved in decision-making. Critics argue that such systems dehumanize warfare, removing the human element of empathy and discretion, potentially leading to faster escalations and less restraint in conflict. Furthermore, the risk of algorithmic bias, where historical data might inadvertently lead the AI to unfair or inaccurate conclusions, poses a severe threat to legitimate targeting.

Moreover, the distinction between "human in the loop" and "human on the loop" is critical. A human "in the loop" maintains direct control over each decision, while a human "on the loop" supervises but allows the AI to make decisions unless interrupted. The alleged nature of Israel's platform suggests a high degree of autonomy, making the distinction paramount. Nations worldwide are grappling with how to regulate such technology. For instance, the discussion around India's new AI law highlights the global push to define boundaries for AI. Even though that law is primarily focused on content moderation, the principles of ethical AI governance apply across sectors, including defense.

Geopolitical Ripple Effects

The advent of such an AI platform fundamentally alters the delicate balance of power in the Middle East and beyond. For Iran, it necessitates a recalibration of security protocols and a potential acceleration of its own defensive and offensive AI capabilities. This could ignite an "AI arms race" where nations scramble to acquire or develop similar technologies, leading to increased global instability.

The use of AI for precision targeting also raises the specter of reduced transparency and increased deniability in covert operations. Attribution becomes harder, and the lines between conventional warfare, clandestine operations, and cyber warfare blur further. This technological leap could allow for "surgical strikes" with potentially fewer human footprints, thereby minimizing direct confrontation while maximizing strategic impact.

The international community will likely respond with a mixture of concern and a pragmatic drive to understand and potentially replicate such capabilities. The United States, Russia, China, and other major powers are heavily invested in military AI research, and this development will undoubtedly fuel their own efforts.

The broader implications for global cybersecurity also come to the fore. A highly sophisticated AI platform becomes a prime target for adversarial cyberattacks that aim not just to neutralize its capabilities but also to manipulate its outputs or steal its underlying technology. Concerns about AI backdoors, sleeper agents, and other vulnerabilities are not just theoretical in the civilian sphere; they become critical national security issues when applied to lethal autonomous systems. A robust cybersecurity posture is essential for any nation deploying such advanced tools, as highlighted by broader discussions around cybersecurity in the age of AI disruption.

The Human Element: Still Indispensable?

Despite the advanced capabilities of such an AI platform, it is crucial to remember that AI is a tool. It processes information and makes recommendations based on its programming and data. The ultimate decision to act, especially in matters of life and death, remains in principle with human commanders. However, the sheer volume and complexity of data processed by AI could create a dependency in which human decision-makers rely so heavily on the AI's analysis that they become less critical, or simply overwhelmed, and accept its conclusions without sufficient independent scrutiny.

This dynamic introduces a new kind of risk: human fallibility amplified by algorithmic certainty. The speed at which an AI can analyze and recommend could also compress decision cycles, leaving less time for careful deliberation and increasing the potential for hasty actions based on imperfect algorithmic understanding. Therefore, rigorous training for human operators, robust ethical guidelines, and fail-safe mechanisms become more important than ever.

Conclusion: A New Chapter in Conflict

The alleged deployment of Israel's AI platform for targeting Iranian leaders heralds a new, complex chapter in international relations and warfare. It underscores the undeniable momentum of Artificial Intelligence in military applications, promising unprecedented precision and strategic advantage, but simultaneously raising profound ethical, legal, and geopolitical questions that the global community is ill-prepared to answer. As nations continue to invest heavily in AI, the imperative to establish clear international norms, robust regulatory frameworks, and a shared understanding of accountability for autonomous weapons systems has never been more urgent. The future of conflict may well be decided not just on battlefields, but in the intricate algorithms that power tomorrow's war machines.
